BLTS > TBSL, the order matters

OK, yes the post heading is a bit obscure and for a specific audience; so let me explain.

Over in the MyData.org community (and others such as kantarainitiative.org) there is a concept of ‘the BLT Sandwich’. That’s explained in a bit more detail in this post, but for this purpose the BLT Sandwich, or more accurately the initials B, L, T and S, represent the four levers that we must push, pull or mitigate to effect change in how personal data is used to empower individuals. To elaborate:

Business

What new kinds of business models are needed when developing new services based on sharing personal data across services? What needs to happen to show that ethical business can be better business?

Legal

How can different forms of legislation and regulation (and their enforcement) provide ingredients for solutions that enable the shift to a fair and sustainable digital world? How can legislation be a driver for innovation, not a blocker?

Tech

How can technology be wielded for the benefit of individuals and especially to protect human rights? How can technologies around personal data be harnessed to help the individuals, provide efficient public services – and help us reach the UN Sustainable Development Goals?

Society

How can individuals, communities and societies collectively use, share and benefit from personal data and grow towards a healthy and thriving data economy for all?

That’s all well and good, and also makes for a really nice lunch. However, the problem I’m pointing to in this post is that whilst ‘BLTS’ trips off (and onto) the tongue nicely, the order of the letters is incorrect; it should be T B S L.

Yes, I’m being a bit flippant, but my real point is that the order in which we think about the Business, Legal, Technology and Societal changes we seek is REALLY IMPORTANT. The changes we seek won’t all happen at the same time or at the same pace; and if we fail to recognise the actual most likely path to empowerment then our objectives will take longer to achieve, and follow a more winding path.

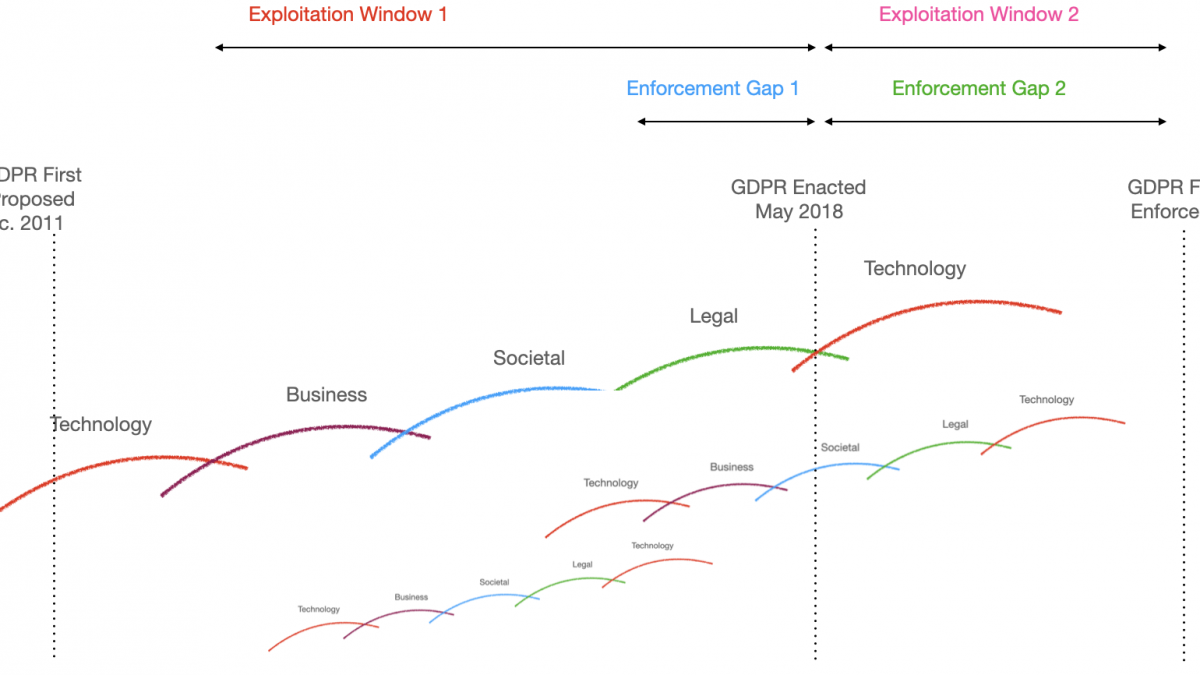

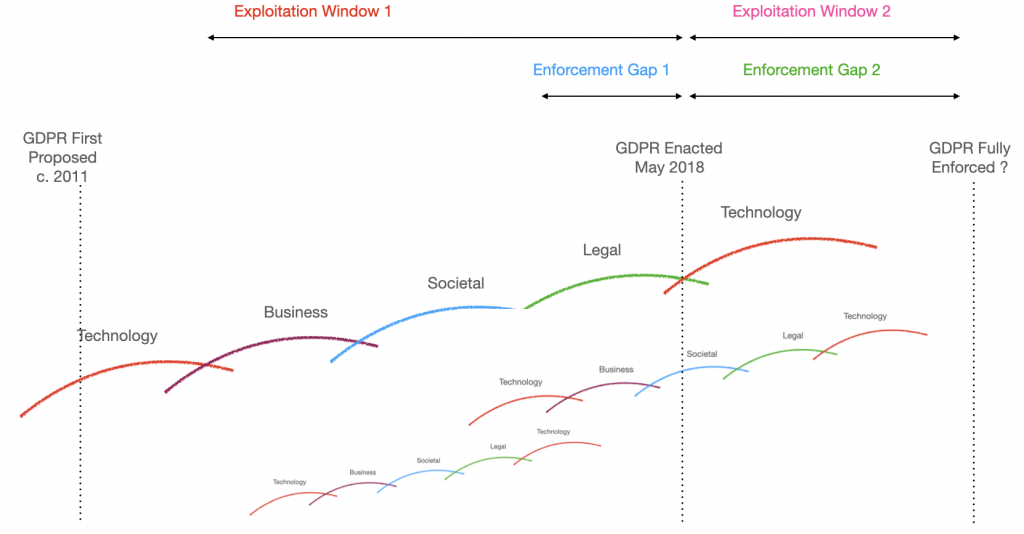

The visual below is my interpretation of how technology and data related innovation unfolds.

My suggestion is that the actual order in which evolution takes place is more akin to:

- Technology innovation – someone makes a technological breakthrough enabling something that was not possible, or is better/ lower cost than before; then,

- Businesses see how they can make/ save money from this technical innovation and launch products/ services built with it; then,

- Society adopts the products/ services based on this innovation; then,

- Legal/ regulatory processes identify any negative implications of this innovation and define ways to mitigate the issue.

In this sequential mode, windows of exploitation emerge; these are the periods between the innovation and the regulation, and then, with slightly differing characteristics, the window between regulation being enacted and enforced. In parallel, enforcement gaps are those that exist between a) regulation being announced and being enacted (2+ years in the case of GDPR), and then b) regulation being enacted and being enforced (a further 2+ years for multiple aspects of GDPR such as adtech or data portability).
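As a rough way of making those windows and gaps tangible, the sketch below treats one cycle as four milestone dates and measures the distances between them. The class, its field names and the dates are all placeholders invented for illustration; they are not taken from GDPR or any other real timeline.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class InnovationCycle:
    """Milestones of one TBSL cycle (hypothetical model, illustrative names)."""
    innovation: date  # the technology breakthrough (T)
    announced: date   # regulation is announced
    enacted: date     # regulation comes into effect (L)
    enforced: date    # regulation is meaningfully enforced

    def exploitation_window_days(self) -> int:
        # Period between the innovation and the regulation being enacted.
        return (self.enacted - self.innovation).days

    def enforcement_gap_days(self) -> tuple[int, int]:
        # (a) announced -> enacted, then (b) enacted -> enforced.
        return (
            (self.enacted - self.announced).days,
            (self.enforced - self.enacted).days,
        )


# Placeholder dates, chosen only to make the arithmetic visible.
cycle = InnovationCycle(
    innovation=date(2010, 1, 1),
    announced=date(2016, 1, 1),
    enacted=date(2018, 1, 1),
    enforced=date(2020, 1, 1),
)

print(cycle.exploitation_window_days())  # 2922 days, i.e. eight years
print(cycle.enforcement_gap_days())      # (731, 730), i.e. two years each
```

Several such cycles can be modelled side by side, one instance per technology, which is a simple way to see that the windows overlap rather than queue up neatly.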

There are many examples of this cycle, and this order of events; and note that many such cycles emerge and run in tandem. For example, one could point to technologies that are well into their business growth phase such as facial recognition, or machine learning, or gene therapy. All have massive potential upsides, but also significant potential risks or harms; one would assume that regulations that target these specific harms remain several years out from this point in time.

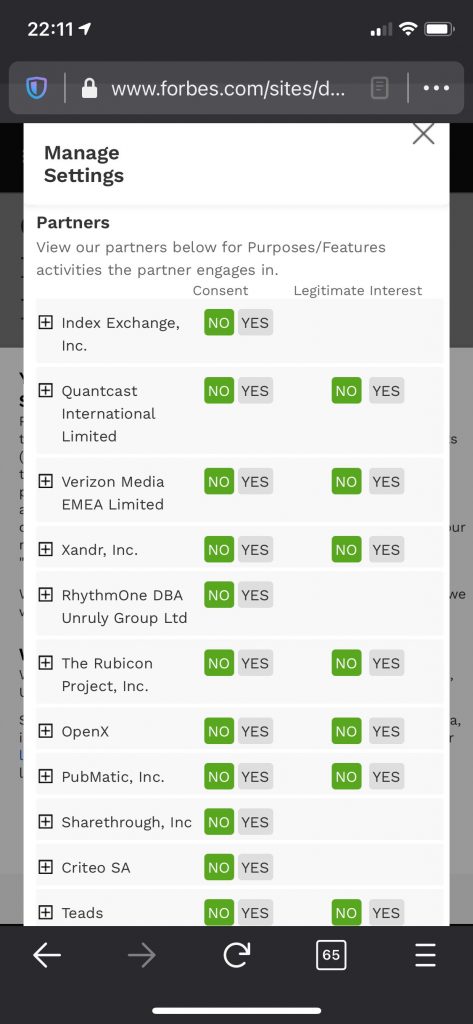

What does this TBSL process mean for our key focus on personal data empowerment? Right now it feels that this means ongoing frustration and slower progress than might otherwise be the case; it is not unreasonable to suggest that GDPR is still in the ‘wait and see’ bucket. How is it, for example, that almost three years into the GDPR era of live regulation, individuals still have to navigate things like the IAB framework and its use of legitimate interest as a means to maintain AdTech profiles? To be specific, why should individuals have to make ten or more clicks on a web page/ app before accessing it, just to prevent something that could easily be derived from a single, automated do not track signal?

Example of the IAB Framework/ Legitimate Interest in Action
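By contrast, reading a single automated signal is technically trivial. The sketch below checks a request's headers for the `DNT: 1` (Do Not Track) or `Sec-GPC: 1` (Global Privacy Control) header, both of which browsers can send without any clicks from the individual. The function name is hypothetical, and whether a service honours the signal is a policy choice rather than a technical one.

```python
def tracking_opt_out(headers: dict[str, str]) -> bool:
    """Return True if the request carries a browser-level opt-out signal.

    Hypothetical helper: it only detects the signal, it does not enforce it.
    """
    # HTTP header names are case-insensitive, so normalise before lookup.
    normalised = {name.lower(): value.strip() for name, value in headers.items()}
    return normalised.get("dnt") == "1" or normalised.get("sec-gpc") == "1"


# One header, zero clicks.
print(tracking_opt_out({"DNT": "1"}))               # True
print(tracking_opt_out({"User-Agent": "example"}))  # False
```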

Looking to the future, there is clearly a lot of good work happening on the technology front around things such as decentralised identity, verifiable credentials and confidential data stores for people, and some sign of business adoption (e.g. the 27 emerging MyData Operators). But we’ve not yet hit the societal adoption phase at any kind of scale, and thus have not yet landed firmly on the regulatory planning cycle. Data protection (the defence) is obviously well represented with GDPR, CCPA et al; but that’s not the same as proactive regulation with the objective of empowering people with their data (the offence). An illustration of this is the current work in the EU on the Data Governance Act; comments here from the MyData Operators group. Yet again we see regulation about personal data that does not treat the individual themselves as a valid, independent actor; moving personal data around is somehow seen only from the perspective of organisations.

One can only hope that there is now enough momentum behind personal data empowerment as an objective to make that a reality; but for now we should at least recognise that it will be a long time before regulation underpins this. Progress via technological innovation and then business adoption is far closer to reality.