Navigating challenges and opportunities around personal data

71+

Articles

50k

Words written

2004

Consulting Since

Recent Posts

August 5, 2019

This week I watched the excellent documentary The Great Hack. A horrifying story, very well told; congratulations to all those involved in making that and bringing […]

February 8, 2019

Just looking at the German Competition Authority’s decision around Facebook and its interpretation of the use of consent. That will have a big impact. Whilst I’m […]

November 1, 2018

I’ve not really known what to do with this site/blog since the untimely death of my good friend and colleague John Butler. Until I do […]

January 16, 2015

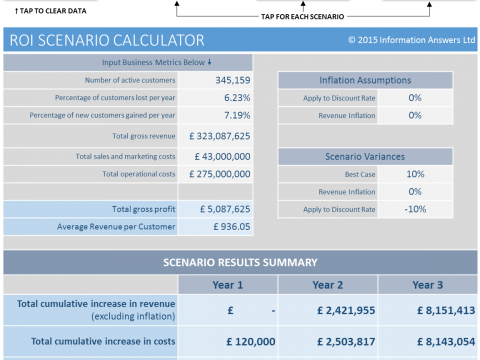

The internet of things (IoT) is generating Big Data and driving the need for business-ready analytics. But the explosion of marketing channels in which organizations are […]

October 24, 2014

By John Butler — Marketing has long yearned for a step change from being limited to a sales support function such as generating leads for sales […]

February 12, 2013

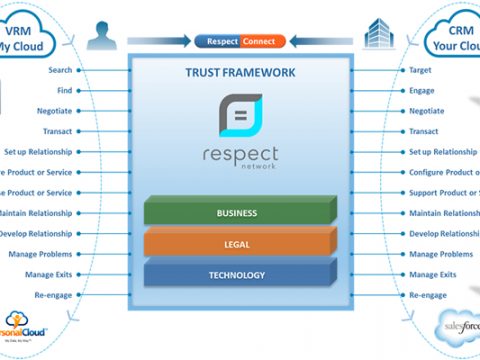

I’ve long been an advocate of Craig Burton’s thesis that ‘VRM’ and the inevitable shift towards ‘personal clouds’ will be good for organisations. His excellent […]